Find it here.

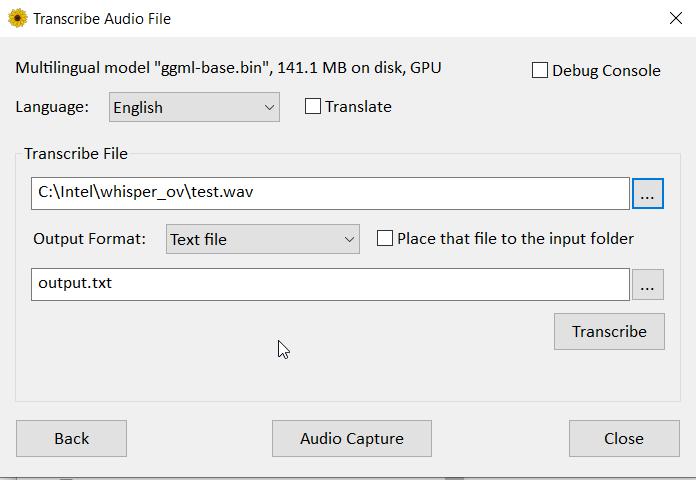

This version uses your GPU and is about 3 to 4 times faster, although quality is slightly lower than with the CPU version.

I am getting good results (i.e. fewer hallucinations and loops) with the parameters below.

If the goal is to have an AI turn the transcript into minutes or a summary, the quality is perfectly acceptable.

In your prompt, mention that the transcript was generated by an AI and may contain hallucinations, loops, etc., and provide a bit of context (it was a meeting about X and Z with person/function A and person/function B). This usually gives very good results.
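As an illustration of the prompt advice above, here is a minimal Python sketch that assembles such a prompt. The function name `build_summary_prompt`, its parameters, and the exact wording of the instructions are assumptions made for this example; adapt them to whatever model and tooling you use.

```python
def build_summary_prompt(transcript: str, topics: list[str], participants: list[str]) -> str:
    """Assemble a prompt asking a model to turn a noisy transcript into minutes.

    Hypothetical helper: the wording below is only one possible phrasing.
    """
    # One sentence of context: what the meeting was about and who attended.
    context = (
        f"It was a meeting about {' and '.join(topics)} "
        f"with {' and '.join(participants)}."
    )
    return "\n".join([
        # Warn the model that the input is machine-generated and imperfect.
        "The transcript below was generated automatically by an AI speech-to-text model.",
        "It may contain hallucinations, repeated loops, and misheard words.",
        context,
        "Write the minutes of this meeting, ignoring passages that are clearly artifacts.",
        "",
        "Transcript:",
        transcript,
    ])
```

You would then read the generated `.srt` (or plain text) file, pass its content as `transcript`, and send the resulting string to the model of your choice.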

```
whisper-cli.exe -m "../ggml-base.bin" -f "../output.wav" -osrt --language fr --max-context 50 --beam-size 3 --temperature 0 --temperature-inc 0.2 --threads 8 --split-on-word
```
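If you run this on several recordings, it can be convenient to build the same command from a script. The sketch below is one possible way to do it in Python; the helper names `whisper_command` and `transcribe` are hypothetical, and it only reuses the flags and values from the command above. The short comments on each flag are my reading of the `whisper-cli` help text, so check `whisper-cli.exe --help` for your build.

```python
import subprocess


def whisper_command(model: str, audio: str, language: str = "fr",
                    threads: int = 8, exe: str = "whisper-cli.exe") -> list[str]:
    """Return the argument list for the whisper-cli call used in this post."""
    return [
        exe,
        "-m", model,                    # path to the ggml model file
        "-f", audio,                    # input audio file
        "-osrt",                        # write the result as an .srt file
        "--language", language,         # spoken language of the recording
        "--max-context", "50",          # limit how much previous text is reused as context
        "--beam-size", "3",             # beam search width
        "--temperature", "0",           # start decoding at temperature 0
        "--temperature-inc", "0.2",     # temperature step used on fallback
        "--threads", str(threads),      # number of CPU threads
        "--split-on-word",              # split segments on words rather than tokens
    ]


def transcribe(model: str, audio: str) -> None:
    """Run whisper-cli and raise CalledProcessError if it exits with an error."""
    subprocess.run(whisper_command(model, audio), check=True)
```

For example, `transcribe("../ggml-base.bin", "../output.wav")` would run the same command as above, assuming `whisper-cli.exe` is in the current directory or on your `PATH`.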